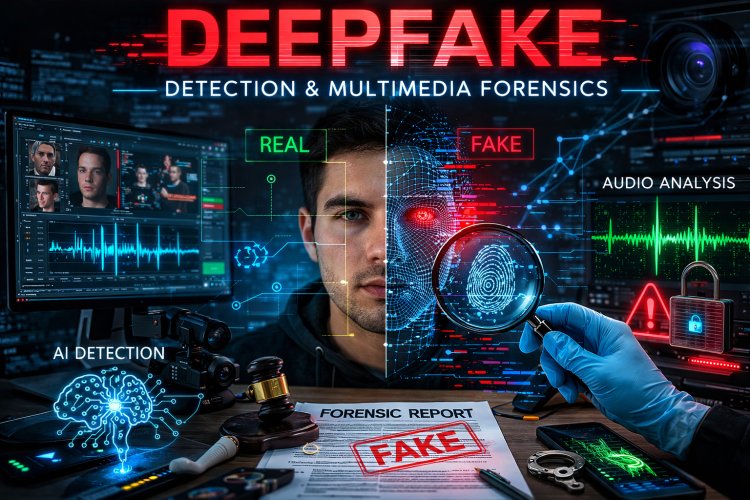

Deepfake Detection and Multimedia Forensics

Deepfake Detection and Multimedia Forensics is a field of digital forensics that focuses on identifying manipulated or fake multimedia content such as images, videos, and audio created using artificial intelligence (AI). Deepfakes use deep learning algorithms, especially Generative Adversarial Networks (GANs), to generate highly realistic fake media that can replace faces, voices, or entire scenes. This area of forensics aims to detect, analyze, and verify the authenticity of multimedia evidence to prevent misinformation, fraud, and cybercrime.

1. What is a Deepfake?

A deepfake is a type of synthetic media created using AI-based deep learning techniques that manipulate or generate realistic images, videos, or audio.

The word “deepfake” comes from:

- Deep Learning
- Fake Media

Deepfakes can:

- Replace a person’s face in a video
- Imitate someone’s voice
- Create fake speeches
- Alter images realistically

Example:

A video showing a person saying something they never actually said.

2. What is Multimedia Forensics?

Multimedia Forensics is the scientific analysis of multimedia data to determine:

- Authenticity of images, videos, and audio
- Source of media
- Evidence of manipulation or tampering
- Timeline of creation

It helps investigators determine whether media content is genuine or altered.

Types of multimedia examined:

- Digital images
- Videos
- Audio recordings
- Social media files
- Surveillance footage

3. Technologies Used to Create Deepfakes

1. Generative Adversarial Networks (GANs)

GANs consist of two neural networks:

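- Generator: creates fake images or videos
- Discriminator: tries to tell whether a given sample is real or generated

The two networks are trained against each other: as the discriminator gets better at spotting fakes, the generator is pushed to produce more realistic output.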
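
The competition between the two networks can be written as a minimax game. The form below is the one from the original GAN formulation (Goodfellow et al., 2014), not something specific to this article. The symbols are introduced here for illustration: $D(x)$ is the discriminator's estimated probability that sample $x$ is real, $G(z)$ is the generator's output for a random noise vector $z$, and $p_{\text{data}}$ and $p_z$ are the distributions of real data and noise.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to maximize $V$ by scoring real samples high and generated samples low, while the generator tries to minimize it by making $D(G(z))$ as close to 1 as possible.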

2. Autoencoders

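Autoencoders are neural networks that compress an input into a compact representation and then reconstruct it. For deepfakes, they learn a person's facial features and recreate them on another person's face in a video.

They are commonly used in face swapping.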

3. Face Swapping Algorithms

These algorithms replace a person’s face with another face in a video using AI-based mapping.

4. Voice Cloning

AI models can replicate a person's voice by analyzing small voice samples.

This allows criminals to generate fake audio messages or calls.

4. Types of Deepfakes

Face Replacement

Replacing a person's face with another face in a video.

Facial Expression Manipulation

Changing facial expressions while keeping the same identity.

Voice Deepfakes

Creating synthetic audio that mimics someone's voice.

Full Body Deepfakes

Generating entire fake videos of people performing actions.

5. Why Deepfake Detection is Important

Deepfakes pose serious risks:

Misinformation

Fake political speeches or news videos.

Identity Theft

Impersonating individuals for fraud.

Cybercrime

Fake voice calls for financial scams.

Reputation Damage

Fake videos used for harassment or defamation.

Legal Evidence Tampering

Fake videos used in court cases.

Reliable deepfake detection helps preserve trust in digital media and legal evidence.

6. Deepfake Detection Techniques

1. Visual Artifact Detection

Deepfake videos often contain visual inconsistencies such as:

- Unnatural blinking
- Distorted facial edges
- Lighting inconsistencies
- Blurry regions

Investigators analyze these artifacts.
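
As one illustration of the "blurry regions" cue, a common sharpness heuristic is the variance of the Laplacian: sharp regions produce strong second-derivative responses, blurred ones do not. The sketch below is a minimal pure-Python version, assuming the region has already been cropped and converted to a 2D grid of grayscale values. The function name and the toy patches are hypothetical, and a low score is only a hint, not proof of manipulation.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior of a 2D grayscale grid."""
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Discrete Laplacian: sum of the four neighbours minus 4x the centre pixel.
            lap = (gray[y - 1][x] + gray[y + 1][x]
                   + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            responses.append(lap)
    return pvariance(responses)


# Toy patches (made up for illustration): a high-contrast checkerboard and a flat patch.
sharp_patch = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
flat_patch = [[128] * 8 for _ in range(8)]

print("sharp patch score:", laplacian_variance(sharp_patch))
print("flat patch score:", laplacian_variance(flat_patch))
```

In practice the score of the face region would be compared with the score of the surrounding frame, since a face that is much softer than its background is more suspicious than a uniformly soft video.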

2. Facial Landmark Analysis

Algorithms track facial landmarks (key points around the eyes, mouth, and jaw) over time to analyze:

- Eye movement
- Lip synchronization
- Head movement

Deepfake models sometimes fail to reproduce natural human behavior.
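
One widely used landmark-based measure for blinking is the eye aspect ratio (EAR), which drops sharply when the eye closes. The sketch below assumes six (x, y) landmarks per eye have already been extracted by some landmark detector (not shown). The threshold of 0.2 and the minimum run of 2 frames are illustrative assumptions and would need tuning per dataset.

```python
from math import dist


def eye_aspect_ratio(eye):
    """EAR for six (x, y) landmarks p1..p6 ordered around the eye.

    p1 and p4 are the eye corners; p2, p3 lie on the upper lid and p6, p5 below them.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = dist(p2, p6) + dist(p3, p5)
    horizontal = dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series, threshold=0.2, min_frames=2):
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks, run = 0, 0
    for ear in ear_series:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink that is still in progress at the end of the clip
        blinks += 1
    return blinks


# Hypothetical landmarks for an open eye and a made-up per-frame EAR series.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
ear_per_frame = [0.3, 0.3, 0.1, 0.1, 0.1, 0.3, 0.3, 0.15, 0.3]

print("EAR of open eye:", eye_aspect_ratio(open_eye))
print("blinks detected:", count_blinks(ear_per_frame))
```

A clip with far fewer blinks than expected for its length could then be flagged for closer inspection.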

3. Frequency Domain Analysis

Fake images often leave patterns in the frequency spectrum.

Forensic tools analyze image frequency patterns to detect manipulation.
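
A rough way to look at frequency patterns is to transform a grayscale patch with a 2D discrete Fourier transform and measure how much of the energy sits at high frequencies. The sketch below uses a naive pure-Python DFT that is only practical for small patches (real tools use an FFT). The function names, the cutoff value, and the toy patches are assumptions for illustration. An unusual ratio is a signal to investigate, not a verdict.

```python
import cmath
import math


def dft2(img):
    """Naive 2D discrete Fourier transform of a small square grayscale grid."""
    n = len(img)
    out = [[0j] * n for _ in range(n)]
    for u in range(n):
        for v in range(n):
            total = 0j
            for y in range(n):
                for x in range(n):
                    total += img[y][x] * cmath.exp(-2j * cmath.pi * (u * y + v * x) / n)
            out[u][v] = total
    return out


def high_frequency_ratio(img, cutoff):
    """Share of non-DC spectral energy at frequencies above `cutoff` cycles per patch."""
    n = len(img)
    spectrum = dft2(img)
    high = total = 0.0
    for u in range(n):
        for v in range(n):
            if u == 0 and v == 0:
                continue  # skip the DC term, which only encodes mean brightness
            # Fold the indices so that bin n-1 counts as frequency 1, and so on.
            fu, fv = min(u, n - u), min(v, n - v)
            energy = abs(spectrum[u][v]) ** 2
            total += energy
            if max(fu, fv) > cutoff:
                high += energy
    return high / total if total else 0.0


# Toy patches (made up): a pixel-level checkerboard and a slow horizontal gradient wave.
checker = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
smooth = [[128 + 100 * math.cos(2 * math.pi * x / 8) for x in range(8)] for y in range(8)]

print("checkerboard:", high_frequency_ratio(checker, cutoff=2))
print("smooth wave:", high_frequency_ratio(smooth, cutoff=2))
```

The checkerboard concentrates its energy at the highest frequency, while the smooth wave keeps it at the lowest, which is the kind of difference a frequency-domain detector looks for.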

4. Biological Signal Detection

Real videos contain natural signals like:

- Subtle skin-color changes caused by the heartbeat
- Micro facial movements

Deepfakes may fail to replicate these signals accurately.
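
The heartbeat cue can be sketched as follows: take the average green-channel value of the facial skin region in each frame, then check whether the strongest periodic component falls in a plausible heart-rate range. The code assumes the per-frame averages have already been extracted (not shown). The band of 0.7 to 4.0 Hz (roughly 42 to 240 beats per minute) and the function names are illustrative assumptions.

```python
import cmath
import math


def dominant_frequency(signal, fps):
    """Frequency in Hz with the largest DFT magnitude, ignoring the DC term."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(c * cmath.exp(-2j * cmath.pi * k * t / n)
                    for t, c in enumerate(centered))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * fps / n


def has_plausible_pulse(green_means, fps, low_hz=0.7, high_hz=4.0):
    """True if the dominant frequency lies inside the assumed heart-rate band."""
    return low_hz <= dominant_frequency(green_means, fps) <= high_hz


# Synthetic 10-second signal at 30 fps with a 1.2 Hz (72 bpm) component, for illustration.
fps = 30
pulse = [100 + 0.5 * math.sin(2 * math.pi * 1.2 * t / fps) for t in range(300)]

print("dominant frequency (Hz):", dominant_frequency(pulse, fps))
print("plausible pulse:", has_plausible_pulse(pulse, fps))
```

Real footage is much noisier than this synthetic signal, so practical systems add filtering and motion compensation before drawing any conclusion.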

5. AI-Based Detection

Machine learning models are trained to distinguish between real and fake media.

Common algorithms:

- Convolutional Neural Networks (CNN)
- Deep neural networks
- Transformer-based models
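
Training a CNN or transformer needs a deep learning framework and a labeled dataset, which is beyond a short example. As a much simpler stand-in for the same idea (learn a decision rule from labeled real and fake samples), the sketch below trains a logistic regression classifier on two hand-crafted feature scores. The data is synthetic and made up purely for illustration, and all names and hyperparameters are assumptions.

```python
import math
import random


def predict_proba(weights, bias, x):
    """Probability that feature vector x belongs to the 'fake' class."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(samples, labels, lr=0.5, epochs=200):
    """Fit weights and bias by batch gradient descent on the log loss."""
    dim = len(samples[0])
    weights, bias = [0.0] * dim, 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(samples, labels):
            err = predict_proba(weights, bias, x) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Synthetic standardized feature scores (not real measurements): label 0 = real, 1 = fake.
rng = random.Random(0)
real = [[rng.gauss(-1.0, 0.3), rng.gauss(-1.0, 0.3)] for _ in range(50)]
fake = [[rng.gauss(1.0, 0.3), rng.gauss(1.0, 0.3)] for _ in range(50)]
samples, labels = real + fake, [0] * 50 + [1] * 50

weights, bias = train_logistic(samples, labels)
correct = sum((predict_proba(weights, bias, x) >= 0.5) == bool(y)
              for x, y in zip(samples, labels))
accuracy = correct / len(samples)
print("training accuracy:", accuracy)
```

Real detectors learn their features directly from pixels or audio, and they must be evaluated on held-out data from generators they were not trained on, which this toy example does not attempt.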

7. Multimedia Forensics Investigation Process

Step 1: Evidence Collection

Collect the image, video, or audio file.
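
A common practice at this step, added here as an illustration rather than something the steps above prescribe, is to record a cryptographic hash of the file at collection time so that later analysis can show the evidence was not altered. A minimal sketch using Python's standard `hashlib` module (the function names are hypothetical):

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_evidence(path, recorded_hash):
    """True if the file still matches the hash recorded at collection time."""
    return sha256_of_file(path) == recorded_hash.lower()
```

The digest would be written into the case notes when the file is collected, and `verify_evidence` would be run again before each later analysis step.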

Step 2: Metadata Analysis

Check file metadata such as:

- Creation date
- Device information
- Software used
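
For JPEG images, fields like these are typically stored in an EXIF block inside an APP1 segment of the file header. The sketch below walks the header segments and reports whether an EXIF block is present. It is a simplified parser that assumes a well-formed header in which every segment before the image data carries a length field. Decoding the individual EXIF fields is left to a dedicated tool, and the function names are hypothetical.

```python
import struct


def list_jpeg_segments(data):
    """Return (marker, payload) pairs for the header segments of a JPEG byte string."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    segments = []
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            break  # malformed header; stop rather than guess
        marker = data[pos + 1]
        if marker == 0xDA:  # SOS: compressed image data follows
            break
        # The 2-byte big-endian length includes the length field itself.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        segments.append((marker, data[pos + 4:pos + 2 + length]))
        pos += 2 + length
    return segments


def has_exif(data):
    """True if the header contains an APP1 segment that starts with the EXIF signature."""
    return any(marker == 0xE1 and payload.startswith(b"Exif\x00\x00")
               for marker, payload in list_jpeg_segments(data))
```

Missing EXIF data is not evidence of tampering on its own, since many platforms strip metadata on upload, but it does tell the investigator that device and software information has to come from another source.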

Step 3: Content Analysis

Analyze:

- Visual artifacts
- Compression patterns
- Pixel inconsistencies

Step 4: AI Detection

Use deep learning models to detect deepfake characteristics.

Step 5: Reporting

Document the findings in a forensic report.

8. Tools Used in Deepfake and Multimedia Forensics

Common tools include:

| Tool | Purpose |
|---|---|
| Deepware Scanner | Detect deepfake videos |
| Sensity AI | Deepfake detection platform |
| Forensically | Image forensic analysis |
| Amped Authenticate | Image authentication |
| Video Authenticator | Video integrity verification |

9. Challenges in Deepfake Detection

Rapidly Improving AI

Deepfake generation techniques often improve faster than detection methods can adapt.

High Quality Deepfakes

Modern deepfakes are extremely realistic.

Lack of Detection Standards

There are no universal standards for deepfake verification.

Large Data Volume

The huge volume of multimedia content online makes detection at scale difficult.

10. Real World Applications

Law Enforcement

Detect fake evidence in criminal investigations.

Social Media Platforms

Identify manipulated content.

Journalism

Verify authenticity of news media.

National Security

Detect propaganda and misinformation.

Cybersecurity

Prevent AI-based fraud and scams.
